Clay API Limits, Authentication & Rate Limits Explained

Your enrichment workflow ran perfectly on 50 test records. Then you pointed it at 10,000 contacts and everything collapsed: 429 errors, duplicate CRM writes, and a credit balance that evaporated overnight. The Clay API usually is not the root cause. The root cause is treating authentication, rate limits, and quota ceilings as afterthoughts instead of design constraints.

This piece is for RevOps teams, growth engineers, and sales ops builders who use (or plan to use) the Clay API for enrichment, outbound personalization, or CRM hygiene automations. You will walk away with a production-ready mental model for Clay API authentication, what Clay API limits look like under real load, and the integration patterns that keep workflows reliable without constant monitoring. This is not a reprint of the Clay API docs. It is the practical layer on top, based on integration work and the failure modes that show up at scale.

Clay API Basics: The Mental Model That Matters

Clay acts as a workflow orchestration layer for sales tech, connecting enrichment providers, research tools, and outbound channels into composable sequences. The Clay API is how you trigger and control those sequences from your own systems (a CRM webhook, a form-fill event, a nightly batch job). In this context, an API is simply a structured interface that lets your code send requests to Clay's platform and receive data back.

Common GTM use cases include enriching inbound leads on form submission, cleaning stale CRM records, building prospect lists from intent signals, and personalizing outbound sequences. Clay's HTTP API enrichment lets you connect to any external API (even those without a native Clay integration) using standard methods like GET, POST, PUT, or DELETE. In practice, integrations break on three surfaces: endpoints, authentication, and limits. Limits tend to surface first, especially when one workflow fans out into many calls.

Authentication That Won't Bite You Later

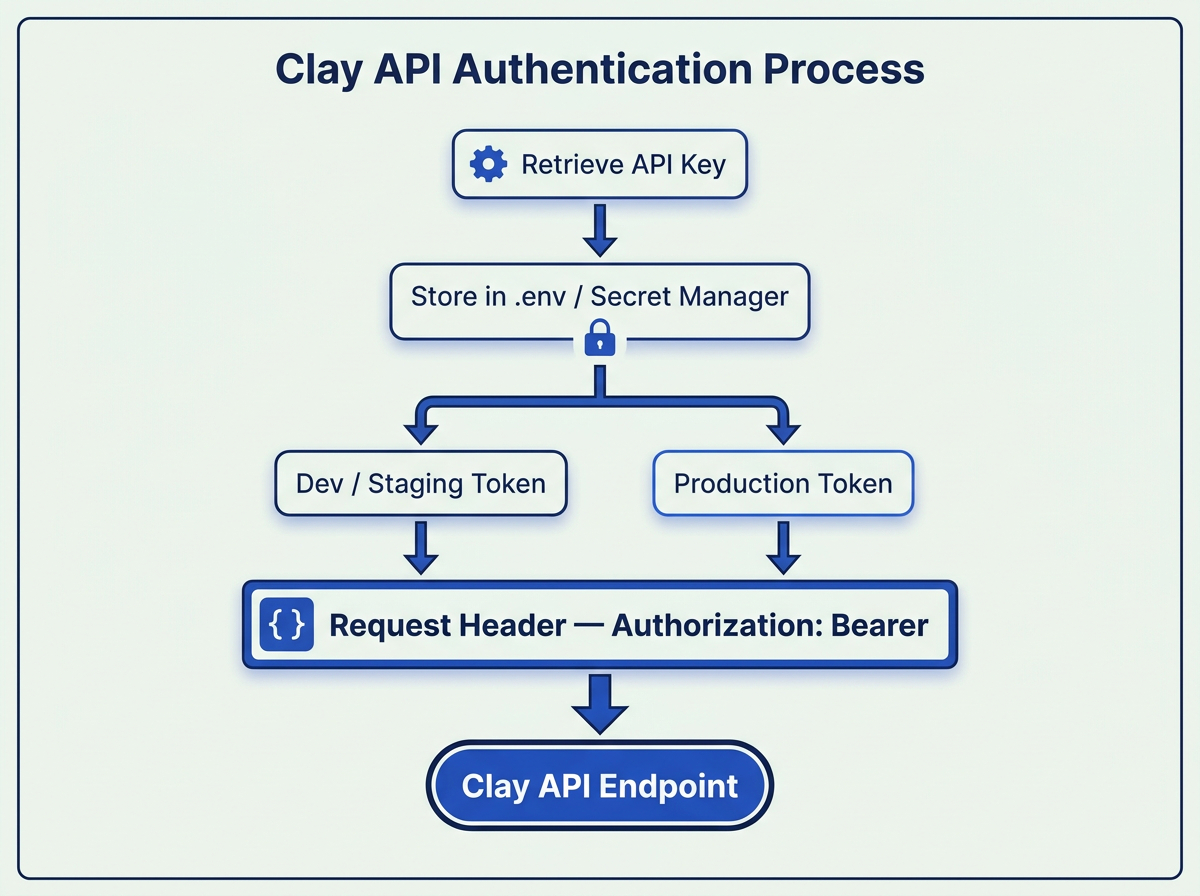

People use "Clay API key" and "Clay API token" interchangeably, but the distinction matters operationally. Your Clay API key is the credential you retrieve from your account settings: navigate to Settings and locate the "API key" section under the Account tab. This key authenticates requests to Clay's own endpoints. A Clay API token, in broader usage, can refer to any bearer or session credential you store inside Clay for calling third-party APIs. Clay allows you to store authentication credentials securely at the workspace level for reuse across enrichments, so you're not pasting raw keys into every request.

Separate tokens for dev and production prevent a single revocation from breaking everything.
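Here is a minimal sketch of keeping dev and prod credentials apart in code. It assumes each key lives in its own environment variable (the names `CLAY_API_KEY_DEV` and `CLAY_API_KEY_PROD` are hypothetical) and that the key is sent as a bearer token. That header scheme is an assumption for illustration; confirm the exact header your Clay endpoint expects before relying on it.

```python
import os
from collections.abc import Mapping


def clay_headers(environment: str, env: Mapping[str, str] = os.environ) -> dict[str, str]:
    """Build headers for a request to Clay using the key for one environment.

    Assumptions (not taken from Clay's docs): keys live in environment variables
    named CLAY_API_KEY_DEV / CLAY_API_KEY_PROD, and the key is sent as a bearer
    token. Confirm the header name and scheme your Clay endpoint expects.
    """
    var_name = f"CLAY_API_KEY_{environment.upper()}"
    key = env.get(var_name)
    if not key:
        # Fail fast instead of silently falling back to another environment's key.
        raise RuntimeError(f"{var_name} is not set")
    return {
        "Authorization": f"Bearer {key}",
        "Content-Type": "application/json",
    }
```

Calling `clay_headers("prod")` in a worker that only has the dev variable set raises immediately, which is usually preferable to a production job quietly running on a dev key.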

Security and Rotation Hygiene

Never ship a Clay API key in client-side code or commit it to a repository. For prototypes, a .env file works. For production, use a secret manager (AWS Secrets Manager, HashiCorp Vault, even 1Password CLI). Rotate keys on a schedule, not just after incidents. A zero-downtime rotation pattern: deploy the new key to your worker, verify a successful request, then revoke the old one. This prevents the "who broke prod?" Slack thread and reduces the blast radius of a leaked credential.
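The zero-downtime rotation steps fit in a small function. This is a sketch under stated assumptions: `deploy`, `verify`, and `revoke` are hypothetical callbacks you would wire to your own secret manager, workers, and Clay account settings; nothing here calls a real Clay endpoint. The part worth copying is the rollback branch, which keeps the old key live until the new one has proven itself.

```python
from typing import Callable


def rotate_key(
    old_key: str,
    new_key: str,
    deploy: Callable[[str], None],
    verify: Callable[[str], bool],
    revoke: Callable[[str], None],
) -> bool:
    """Zero-downtime rotation: deploy, verify, and only then revoke.

    `deploy`, `verify`, and `revoke` are hypothetical hooks into your own
    secret manager, worker config, and Clay account settings.
    """
    deploy(new_key)            # workers start reading the new key
    if not verify(new_key):    # one cheap, real request made with the new key
        deploy(old_key)        # roll back; the old key was never revoked
        return False
    revoke(old_key)            # retire the old key only after a verified success
    return True
```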

Hitting Clay API limits? See how Bitscale handles enrichment at scale without throttling headaches.

Clay API Limits: Rate Limits, Concurrency, and Hidden Ceilings

Three terms get conflated constantly. A rate limit caps how many requests you can make per unit of time. A concurrency limit caps how many requests can be in-flight simultaneously. A quota caps total usage over a billing period. Clay allows users to configure rate limits for HTTP API enrichments by specifying the number of requests and the duration in milliseconds. That configurability is useful, but it can also create self-inflicted bottlenecks if your settings are too conservative, or trigger 429 errors if they're too aggressive.
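To see what the two numbers in an HTTP API enrichment's rate-limit setting imply, the sketch below converts a requests-per-window setting into an average rate and an even spacing between calls. The class name is hypothetical; it only mirrors the two fields described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RateLimitSetting:
    """Mirrors the two numbers in the setting: a request count and a window in milliseconds."""
    requests: int
    duration_ms: int

    @property
    def per_second(self) -> float:
        # Average throughput the setting allows.
        return self.requests * 1000 / self.duration_ms

    @property
    def min_spacing_ms(self) -> float:
        # Gap between evenly spaced calls that never exceed the window's budget.
        return self.duration_ms / self.requests


fast_window = RateLimitSetting(requests=10, duration_ms=1_000)
slow_window = RateLimitSetting(requests=600, duration_ms=60_000)
```

Both settings average 10 requests per second, but in a typical window-based limiter the 60-second window tolerates much bigger bursts than the 1-second one. That difference matters when a batch job and real-time traffic share the same pool.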

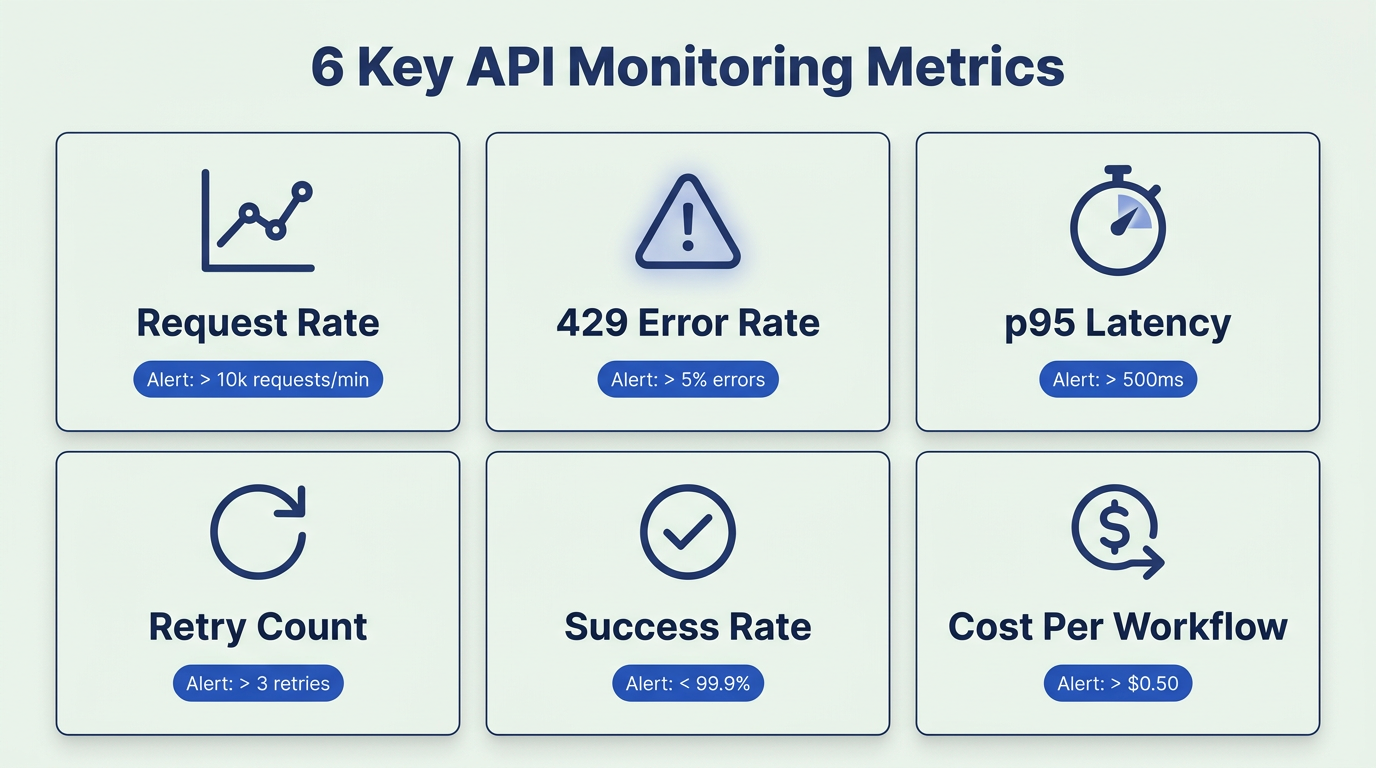

Here is how limits show up in production:
- 429 Too Many Requests: you exceeded a rate or concurrency ceiling (often includes a Retry-After header).
- Partial failures: your dataset ends up half-complete, with some rows enriched and others errored.
- "It worked yesterday" incidents: shared limits across workflows mean a new batch job can starve real-time enrichment.
- 5xx or timeouts: upstream instability, not your fault, but your integration still needs safe retries and fallbacks.
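One way to turn these symptoms into behavior is to map each outcome to an action and read the Retry-After header when it is present. The sketch assumes a timeout is reported as a missing status (`None`), and the function names are hypothetical. Retry-After can be either a number of seconds or an HTTP date (RFC 9110), so both forms are handled.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
from typing import Optional


def classify(status: Optional[int]) -> str:
    """Map an outcome to an action. None means the request timed out."""
    if status is None:
        return "retry"      # timeout: retry safely
    if status == 429:
        return "throttle"   # slow down, honoring Retry-After if present
    if 500 <= status < 600:
        return "retry"      # upstream instability
    if 200 <= status < 300:
        return "ok"
    return "fail"           # e.g. 401: retrying will not help


def retry_after_seconds(value: Optional[str], now: Optional[datetime] = None) -> Optional[float]:
    """Parse Retry-After as delta-seconds or an HTTP date; None if absent or invalid."""
    if not value:
        return None
    value = value.strip()
    if value.isdigit():
        return float(value)
    try:
        when = parsedate_to_datetime(value)
    except (TypeError, ValueError):
        return None
    if when.tzinfo is None:
        when = when.replace(tzinfo=timezone.utc)  # treat zone-less dates as UTC
    now = now or datetime.now(timezone.utc)
    return max(0.0, (when - now).total_seconds())
```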

The scaling trap most teams miss: one user action can fan out into multiple API calls. A single form fill can trigger company lookup, email verification, phone enrichment, and AI research. One "record" might consume five or more API requests. If your rate-limit math only accounts for records and not calls, you will hit ceilings earlier than expected. If the throttling you observe still does not match your configured limits, check your account settings and contact Clay support with specific request IDs.
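The fan-out math is worth writing down explicitly. This sketch assumes a hypothetical workflow where AI research costs two calls and every other step costs one. It applies that workflow to the 10,000-record run from the introduction at an assumed 10 requests per second.

```python
# Hypothetical calls per record for one inbound form-fill workflow.
WORKFLOW_STEPS = {
    "company_lookup": 1,
    "email_verification": 1,
    "phone_enrichment": 1,
    "ai_research": 2,
}


def plan(records: int, steps: dict[str, int], requests_per_second: float) -> tuple[int, int, float]:
    """Return (calls per record, total calls, minimum seconds at the given rate)."""
    calls_per_record = sum(steps.values())
    total_calls = records * calls_per_record
    return calls_per_record, total_calls, total_calls / requests_per_second


per_record, total, seconds = plan(10_000, WORKFLOW_STEPS, requests_per_second=10)
print(per_record, total, round(seconds / 60, 1))  # 5 50000 83.3
```

Five calls per record turn 10,000 records into 50,000 requests: more than 80 minutes of wall-clock time at that rate, before a single retry.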

What Most Teams Get Wrong About Rate Limits

The most common mistake is treating a 429 as an error instead of a scheduling signal. A 429 means "slow down," not "something is broken." The second mistake is retrying instantly, or retrying in parallel. Naive retries amplify throttling: ten workers all getting 429s and retrying simultaneously just increases pressure on the same ceiling.

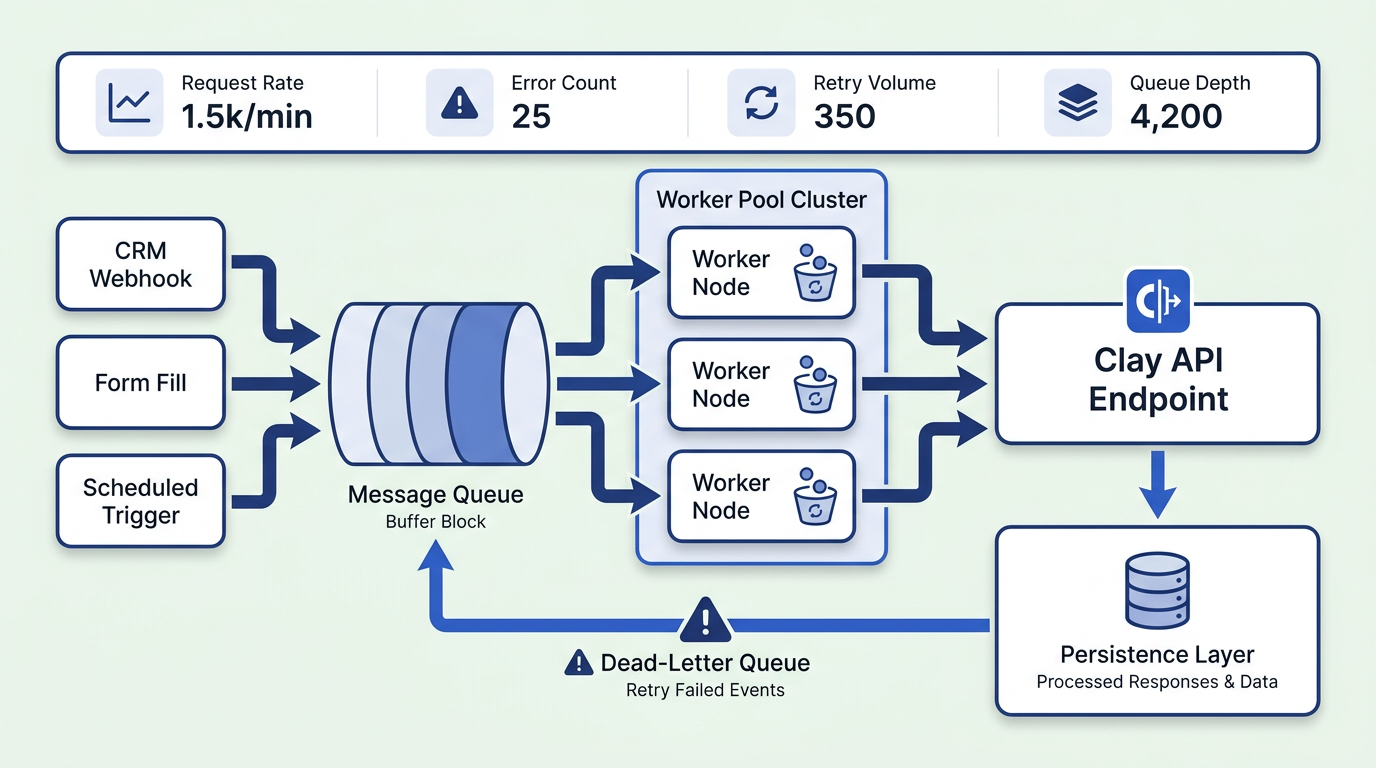

Teams also fail to separate interactive traffic (a sales rep clicking "enrich this lead") from batch traffic (a nightly CRM sync). Both share the same rate-limit pool unless you architect around it. Without idempotency, retries create duplicate records and messy CRM writes. If your integration does not produce the same result when the same request is sent twice, you have a data integrity problem waiting to happen.

Operational checklist for GTM teams:
- Classify traffic: interactive, near-real-time, batch.
- Set SLOs: what is acceptable latency for each class.
- Protect interactive flows: reserve capacity so batch jobs cannot starve them.
- Make writes idempotent: dedupe keys, upserts, and stable fingerprints.
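For the last checklist item, here is a minimal sketch of an idempotent write path. It assumes a fingerprint built from entity ID, enrichment type, and date (the same recipe suggested for replays in the partial-failure section). `IdempotentStore` is a hypothetical in-memory stand-in for a CRM object with a unique external-ID field; in a real CRM you would use its native upsert-by-external-ID operation instead.

```python
import hashlib
from typing import Any


def fingerprint(entity_id: str, enrichment_type: str, day: str) -> str:
    """Stable dedupe key: the same inputs always produce the same key."""
    raw = f"{entity_id}|{enrichment_type}|{day}"
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()


class IdempotentStore:
    """In-memory stand-in for a CRM object with a unique external-ID field."""

    def __init__(self) -> None:
        self.rows: dict[str, dict[str, Any]] = {}

    def upsert(self, key: str, data: dict[str, Any]) -> bool:
        """Insert or merge by key. Returns True only when a new row was created."""
        created = key not in self.rows
        self.rows[key] = {**self.rows.get(key, {}), **data}
        return created
```

Sending the same write twice produces one row: the first `upsert` returns `True` and the retry returns `False`.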

Integration Patterns That Scale Under Clay API Limits

The queue-first pattern absorbs bursts and prevents 429 cascades.
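Here is a minimal sketch of the queue-first idea with Python's `asyncio`. Every incoming record lands in a queue, and a fixed pool of workers drains it, so a burst of form fills becomes a longer queue instead of a spike of concurrent calls. `enrich` stands in for whatever coroutine calls Clay. Error handling is deliberately left out (an exception in `enrich` propagates out of `gather`), and a long-running service would keep consuming rather than exit when the queue is empty.

```python
import asyncio
from typing import Awaitable, Callable, Hashable, Iterable


async def drain_queue(
    records: Iterable[Hashable],
    enrich: Callable[[Hashable], Awaitable[object]],
    workers: int = 4,
) -> dict:
    """Push every record onto a queue, then let a fixed pool of workers drain it.

    However large the burst, at most `workers` enrichment calls are in flight.
    """
    queue: asyncio.Queue = asyncio.Queue()
    for record in records:
        queue.put_nowait(record)
    results: dict = {}

    async def worker() -> None:
        while True:
            try:
                record = queue.get_nowait()
            except asyncio.QueueEmpty:
                return  # queue drained; this worker exits
            results[record] = await enrich(record)

    await asyncio.gather(*(worker() for _ in range(workers)))
    return results
```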

Exponential Backoff with Jitter

On a 429, wait before retrying. Start with a base delay (for example, 1 second), double it on each subsequent failure, and add random jitter (0 to 50% of the delay) so multiple workers do not retry in lockstep. Cap your maximum retry window at 60 to 120 seconds for enrichment workloads. After five consecutive failures, stop retrying that request and route it to a dead-letter queue. Alert a human with the request ID, status code, and timestamp so debugging takes minutes, not hours.
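Here is the retry policy above as a sketch. It assumes a hypothetical convention where your `send` function raises `RetryableError` for 429s, 5xx responses, and timeouts. `dead_letter` is a plain list standing in for a real dead-letter queue. Each delay is capped at 60 seconds by default. Five attempts means at most four sleeps (about 22.5 seconds total with these defaults), which stays inside a 60 to 120 second window unless the server's Retry-After asks for longer.

```python
import random
import time
from typing import Callable, Optional


class RetryableError(Exception):
    """Hypothetical convention: `send` raises this for 429s, 5xx, and timeouts."""

    def __init__(self, status: Optional[int], retry_after: Optional[float] = None):
        super().__init__(f"retryable status {status}")
        self.status = status
        self.retry_after = retry_after


def backoff_delay(attempt: int, base: float = 1.0, cap: float = 60.0,
                  rng: Callable[[], float] = random.random) -> float:
    """Seconds to wait after failed attempt `attempt` (0-based).

    base * 2**attempt, plus 0-50% random jitter, never more than `cap`.
    """
    delay = min(cap, base * 2 ** attempt)
    return min(cap, delay * (1 + 0.5 * rng()))


def call_with_retries(send: Callable[[], object], request_id: str, dead_letter: list,
                      max_attempts: int = 5, sleep: Callable[[float], None] = time.sleep):
    """Try `send` up to `max_attempts` times, then park the request in `dead_letter`."""
    for attempt in range(max_attempts):
        try:
            return send()
        except RetryableError as err:
            if attempt == max_attempts - 1:
                # Everything a human needs to start debugging.
                dead_letter.append({"request_id": request_id, "status": err.status,
                                    "timestamp": time.time()})
                return None
            # Prefer the server's Retry-After hint when it is present.
            wait = err.retry_after if err.retry_after is not None else backoff_delay(attempt)
            sleep(wait)
    return None
```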

Client-Side Token Bucket Throttling

Do not wait for Clay to tell you to slow down. Implement a token bucket on your side that matches (or slightly undercuts) your configured rate limit. If Clay allows 10 requests per second, set your bucket to 8. This buffer absorbs timing variance and keeps you below the threshold. If your traffic is not uniform, use separate buckets per workflow. Teams that skip this step often see 429 spikes at the top of every minute when cron jobs fire simultaneously.
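A token bucket is small enough to own yourself. This sketch refills at `rate` tokens per second up to `capacity`; following the advice above, you would set `rate=8` for a configured limit of 10 per second. The class is hypothetical and thread-safe within one process only; workers in separate processes would need a shared store to coordinate.

```python
import threading
import time
from typing import Callable, Optional


class TokenBucket:
    """Client-side throttle: on average `rate` requests/second, bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: Optional[float] = None,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity if capacity is not None else rate
        self.tokens = self.capacity  # start full
        self.clock = clock
        self.updated = clock()
        self._lock = threading.Lock()

    def _refill(self) -> None:
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now

    def try_acquire(self) -> bool:
        """Take one token if available; never blocks."""
        with self._lock:
            self._refill()
            if self.tokens >= 1:
                self.tokens -= 1
                return True
            return False

    def acquire(self, sleep: Callable[[float], None] = time.sleep) -> None:
        """Block until a token is available, then take it."""
        while not self.try_acquire():
            sleep(1 / self.rate)  # about one token's refill time
```

For non-uniform traffic, keep one bucket per workflow, for example `{"inbound": TokenBucket(rate=5), "nightly_sync": TokenBucket(rate=3)}`. The two together stay at 8 per second, and the nightly job cannot eat into inbound's share.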

Caching Enrichment Results (and When It's Dangerous)

Cache by entity ID plus timestamp. If you enriched a company's headcount yesterday, you probably do not need to re-enrich it today. Set TTLs by field type: work emails can be cached for 7 to 14 days, job titles for 30 days, headcount for 60 to 90 days. Stale data in fast-moving fields (like a VP who just changed roles) is worse than no data. You can also control API usage by setting a maximum output length in your model configuration for AI enrichments, which reduces unnecessary credit consumption.

Practical caching guardrails:
- Cache only stable fields unless you have a refresh trigger.
- Tag cached values with "last verified" so sales and marketing know what is fresh.
- Bypass cache on high-intent events (demo request, pricing page conversion) when accuracy matters most.
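The TTL and bypass rules translate into a small cache. This is a sketch, not a drop-in component. The TTLs use the upper end of the ranges above, and the field names are hypothetical. Fields without a TTL are never served from cache. Each hit returns its last-verified timestamp alongside the value so downstream teams can see how fresh it is.

```python
import time
from typing import Any, Callable, Optional

DAY = 86_400  # seconds

# Upper ends of the ranges above; fields not listed are never served from cache.
TTL_SECONDS = {
    "work_email": 14 * DAY,
    "job_title": 30 * DAY,
    "headcount": 90 * DAY,
}


class EnrichmentCache:
    def __init__(self, clock: Callable[[], float] = time.time) -> None:
        self.clock = clock
        # (entity_id, field) -> (value, last_verified timestamp)
        self.entries: dict[tuple[str, str], tuple[Any, float]] = {}

    def put(self, entity_id: str, field: str, value: Any) -> None:
        self.entries[(entity_id, field)] = (value, self.clock())

    def get(self, entity_id: str, field: str,
            high_intent: bool = False) -> Optional[tuple[Any, float]]:
        """Return (value, last_verified) if fresh; None means 'enrich now'."""
        if high_intent or field not in TTL_SECONDS:
            return None  # demo requests etc. always get fresh data
        hit = self.entries.get((entity_id, field))
        if hit is None or self.clock() - hit[1] > TTL_SECONDS[field]:
            return None
        return hit
```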

Compare Clay's enrichment approach with Bitscale's built-in workflows and data stack.

Clay API Pricing: Where Limits and Costs Collide

Pricing belongs in a limits discussion because throttling changes throughput, and throughput changes spend. If your integration retries aggressively, each retry consumes credits. A nightly CRM sync that re-enriches unchanged records can silently eat your monthly budget. For a closer look at how recent Clay pricing changes and action limits affect teams, that breakdown is worth reading.

Cost-control levers worth implementing:

- Progressive enrichment: run cheap lookups first, expensive AI research only on qualified records.

- Sampling: enrich 10% of a list to validate quality before committing the full run.

- Hard caps per workflow run: set per-workspace and per-workflow budget limits so a single runaway job cannot drain your account.

- Spike alerts: if spend exceeds 3x your daily average, trigger a notification immediately.

- Reliability as a pricing pattern: caching, change detection, and idempotency are not just engineering hygiene. They directly reduce credit waste.
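The hard-cap and spike-alert levers can be sketched in a few lines. Credit amounts here are abstract numbers you would feed from your own usage tracking; nothing in this sketch reads Clay's billing data, and the class and function names are hypothetical.

```python
from statistics import mean


class BudgetExceeded(Exception):
    pass


class RunBudget:
    """Hard credit cap for one workflow run. Charge every call, retries included."""

    def __init__(self, max_credits: float) -> None:
        self.max_credits = max_credits
        self.spent = 0.0

    def charge(self, credits: float) -> None:
        # Refuse the call before it happens rather than noticing afterwards.
        if self.spent + credits > self.max_credits:
            raise BudgetExceeded(
                f"run would reach {self.spent + credits} credits, cap is {self.max_credits}"
            )
        self.spent += credits


def is_spend_spike(today: float, previous_days: list[float], factor: float = 3.0) -> bool:
    """True when today's spend exceeds `factor` times the average of previous days."""
    if not previous_days:
        return False  # no baseline yet
    return today > factor * mean(previous_days)
```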

Advanced Edge Cases You Only Notice at Scale

"Just add more workers" hits a wall when concurrency ceilings are shared across your workspace. Ten workers each making 5 concurrent requests means 50 in-flight calls, which can exceed your plan's concurrency limit even if your per-second rate is fine. Multi-workspace setups isolate workloads by team or region, but each workspace needs its own token and its own rate-limit configuration. For teams evaluating whether Clay's architecture fits their scale, exploring best Clay alternatives can provide useful context.

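The arithmetic behind that wall, as a sketch. The plan limit of 20 concurrent requests used below is a hypothetical number, not a documented Clay limit; substitute whatever ceiling applies to your workspace.

```python
def total_in_flight(workers: int, per_worker: int) -> int:
    """Worst-case concurrent calls when every worker is saturated."""
    return workers * per_worker


def per_worker_share(plan_limit: int, workers: int, reserve: int = 0) -> int:
    """Split a shared workspace concurrency limit across workers.

    `reserve` holds slots back (e.g. for interactive enrichment).
    """
    usable = plan_limit - reserve
    if usable < workers:
        # Past this point, adding workers only adds contention; queue instead.
        raise ValueError("more workers than usable slots")
    return usable // workers
```

With 10 workers at 5 each you would have 50 calls in flight. Dividing a 20-slot limit across the same 10 workers leaves each worker 2 slots, or 1 if you hold back 4 slots for interactive enrichment. The `ValueError` branch marks the point where adding workers stops helping and a queue is the better tool.
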
Partial failure handling is where most integrations quietly accumulate data debt. When a batch of 100 records returns 80 successes and 20 failures, you need a resume mechanism that retries only the failures without re-processing the successes. Use stable request fingerprints (entity ID + enrichment type + date) so replays are safe. Run a daily reconciliation job that compares expected vs. actual enrichment counts and flags discrepancies. Teams can go months without noticing a double-digit failure rate because nobody built this check.

A simple reconciliation template:
- Expected: rows submitted, calls attempted, credits expected.
- Actual: rows enriched, calls succeeded, credits consumed.
- Delta: failures by error code (401, 429, 5xx, timeout).
- Action: retry queue size, dead-letter queue count, and top failing workflows.
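Here is a sketch of the resume-and-reconcile step, assuming you record each request's outcome keyed by its fingerprint. The status buckets mirror the Delta line of the template (401, 429, 5xx, timeout), with an extra `missing` bucket for requests that never came back. Function names are hypothetical.

```python
from collections import Counter
from typing import Union

Status = Union[int, str]  # HTTP status code, or "timeout"


def bucket(status: Status) -> str:
    """Group an outcome into the buckets used by the reconciliation report."""
    if isinstance(status, int) and 200 <= status < 300:
        return "ok"
    if isinstance(status, int) and 500 <= status < 600:
        return "5xx"
    return str(status)  # "401", "429", "timeout", "missing", ...


def reconcile(submitted: list[str], results: dict[str, Status]) -> dict:
    """Compare what was submitted with what came back, and list what to resume.

    submitted: request fingerprints sent in this batch.
    results: fingerprint -> status; fingerprints absent from it never returned.
    """
    outcomes = {fp: bucket(results.get(fp, "missing")) for fp in submitted}
    counts = Counter(outcomes.values())
    succeeded = counts.pop("ok", 0)
    return {
        "expected": len(submitted),
        "succeeded": succeeded,
        "failures_by_code": dict(counts),
        # Only failures are replayed; successes are never re-processed.
        "retry_queue": [fp for fp, b in outcomes.items() if b != "ok"],
    }
```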

These six metrics cover 90% of the signals you need to prevent production incidents.

Key Takeaways and Next Steps

Treat Clay API limits as a design constraint, not a bug to work around. Build with a queue, backoff, and idempotency as your default posture. Add cost guardrails before your first production run, not after the first surprise bill.

Next steps you can implement this week:
- Inventory calls, not just records: map each workflow step to the number of API requests it triggers.
- Add structured logs: request IDs, status codes, retry counts, and workflow identifiers.
- Load-test burst patterns: simulate form-fill spikes plus a batch job running at the same time.
- Protect revenue-critical flows: reserve capacity for interactive enrichment and route batch jobs through a queue.

If Clay's limits are becoming a bottleneck for your GTM workflows, Bitscale offers a Clay alternative with built-in enrichment, workflow automation, and CRM sync designed to scale without the operational overhead.

Ready to simplify your enrichment stack? Try Bitscale free and skip the rate-limit engineering.

Frequently Asked Questions

Where do I find my Clay API key (or Clay API token), and can I create separate ones for dev vs prod?

Navigate to Settings in your Clay account and locate the "API key" section under the Account tab. Clay issues keys at the workspace level, so the cleanest way to separate dev and prod is to use separate workspaces, each with its own key. This also isolates rate-limit pools and credit consumption.

What are Clay API limits and rate limits in practice, and how do I know if I'm hitting concurrency or request-per-minute caps?

Clay lets you configure rate limits by specifying requests and duration in milliseconds. If you see 429 errors with a Retry-After header, you're hitting rate limits. If requests hang or time out without a 429, you're likely hitting concurrency ceilings. Log both status codes and in-flight request counts to distinguish the two.

How should I handle 429 rate limit errors from the Clay API without creating duplicates?

Use exponential backoff with jitter, and make every request idempotent by assigning a stable request fingerprint (entity ID + enrichment type + date). Before retrying, check whether the original request already succeeded. A dead-letter queue catches requests that fail after the maximum retry window so you can process them later without duplication.

Does Clay API pricing change based on API usage, and how do I prevent runaway enrichment costs?

It depends on your plan, but usage directly affects credit consumption. Each API call, including retries, can consume credits. Prevent runaway costs with change detection (skip unchanged records), caching enrichment results with appropriate TTLs, hard caps per workflow run, and progressive enrichment that runs cheap lookups before expensive ones. For more detail on how Clay's HTTP API limits affect budgets, that analysis is worth reviewing.

What's the fastest way to debug a failing Clay API integration when the Clay API docs aren't enough?

Start with the error code. A 401 means check your key and headers. A 429 means check your rate-limit configuration and retry logic. A 5xx means the issue is upstream. Log request IDs, timestamps, and full response bodies. Reproduce the failure with a single curl request to isolate whether the problem is in your code or Clay's platform. If you're stuck, Clay University has walkthroughs, and Clay's support team can trace specific request IDs.
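The logging advice above is easiest to act on when every call produces one machine-readable line. Here is a minimal sketch using the standard `logging` and `json` modules. The field names are a suggested schema, not a Clay format. `request_id` is whatever identifier you have (Clay's, if the response carries one, or your own fingerprint). Logging `in_flight` alongside the status is what lets you tell rate-limit pressure from concurrency pressure.

```python
import json
import logging
import time
from typing import Optional

logger = logging.getLogger("clay_integration")


def log_call(workflow: str, request_id: str, status: Optional[int], retry_count: int,
             in_flight: int, started_at: float, body: str = "") -> str:
    """Emit one JSON log line per API call and return it.

    Field names are a suggested schema (hypothetical), not a Clay log format.
    """
    now = time.time()
    line = json.dumps({
        "timestamp": now,
        "workflow": workflow,
        "request_id": request_id,
        "status": status,          # None for a timeout
        "retry_count": retry_count,
        "in_flight": in_flight,    # concurrent calls at the time of this one
        "latency_ms": round((now - started_at) * 1000),
        "body": body,              # full response body, for reproduction
    }, sort_keys=True)
    logger.info(line)
    return line
```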